When an AI modifies a complex system, the risk of regression grows with the scale of the intervention. A single misplaced character or an unintended whole-file rewrite can break build integrity and introduce subtle bugs. Our research into Precision Bounded Synthesis explores a least-privilege model for autonomous intervention, moving away from destructive modifications toward targeted, symbol-aware synthesis.

The Precision Protocol and AST Validation

We have developed a suite of methodologies for high-precision operations that enforce strict boundaries on every intervention. Our synthesis engine is built on a foundation of abstract syntax tree (AST) awareness. Every proposed edit is first parsed and validated in a virtual environment to ensure that the resulting code is syntactically correct and architecturally valid. This prevents the "broken build" syndrome common in basic autonomous loops, where the agent produces invalid or malformed code.
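The parse-before-apply step can be sketched as follows. This is a minimal illustration that assumes the target language is Python and uses the standard library `ast` module. The names `validate_edit` and `ValidationResult` are hypothetical, and the check covers only syntactic validity, not the architectural validation mentioned above.

```python
import ast
from dataclasses import dataclass
from typing import Optional


@dataclass
class ValidationResult:
    ok: bool
    text: str             # candidate text if ok, otherwise the untouched original
    error: Optional[str]  # parser message when the candidate is rejected


def validate_edit(source: str, start: int, end: int, replacement: str) -> ValidationResult:
    """Apply an edit to an in-memory copy and accept it only if the result parses."""
    candidate = source[:start] + replacement + source[end:]
    try:
        ast.parse(candidate)
    except SyntaxError as exc:
        # The original text is returned unchanged so nothing broken reaches disk.
        return ValidationResult(False, source, f"{exc.msg} (line {exc.lineno})")
    return ValidationResult(True, candidate, None)


if __name__ == "__main__":
    src = "def f(x):\n    return x + 1\n"
    start = src.index("x + 1")
    print(validate_edit(src, start, start + 5, "x + 2").ok)  # a well-formed edit
    print(validate_edit(src, start, start + 5, "x + (").ok)  # a malformed edit
```

Because the candidate is built in memory, a rejected edit never touches the file on disk.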

Our synthesis engine supports several primary modes of operation, each designed for maximum precision:

- Context-anchored matching: Every modification is tied to a unique, character-perfect anchor. If the file has shifted since the agent last read it, the anchor no longer matches and the edit is not applied, which provides a critical safety check against stale state.

- Structural symbolic refactoring: Syntax-aware logic identifies and updates all instances of a symbol together. Renames and signature changes are therefore applied consistently throughout a file, maintaining architectural integrity.

- Bounded-scope interventions: Strict limits are placed on the scale of any single edit. Capping the modification volume prevents the agent from accidentally overwriting unrelated logic or formatting.
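The first and third modes can be illustrated together. The sketch below is not the engine's implementation. The function name `apply_anchored_edit`, the exception `EditRejected`, and the default cap of 200 characters are assumptions chosen for the example.

```python
class EditRejected(Exception):
    """Raised when an edit fails the anchor or size checks."""


def apply_anchored_edit(text: str, anchor: str, replacement: str,
                        max_changed_chars: int = 200) -> str:
    """Replace `anchor` with `replacement` only if the anchor is unique and the edit is small."""
    if not anchor:
        raise EditRejected("anchor must not be empty")
    # Uniqueness check: zero matches means stale state, several mean ambiguity.
    matches = text.count(anchor)
    if matches != 1:
        raise EditRejected(f"anchor must match exactly once, found {matches}")
    # Bounded scope: cap the text that may be removed or inserted (hypothetical limit).
    if max(len(anchor), len(replacement)) > max_changed_chars:
        raise EditRejected("edit exceeds the size cap")
    return text.replace(anchor, replacement, 1)


if __name__ == "__main__":
    source = "a = 1\nb = 2\n"
    print(apply_anchored_edit(source, "b = 2", "b = 3"))
```

An anchor that matches zero times signals that the file no longer looks the way the agent expects. An anchor that matches more than once is ambiguous. Both cases are rejected rather than guessed at.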

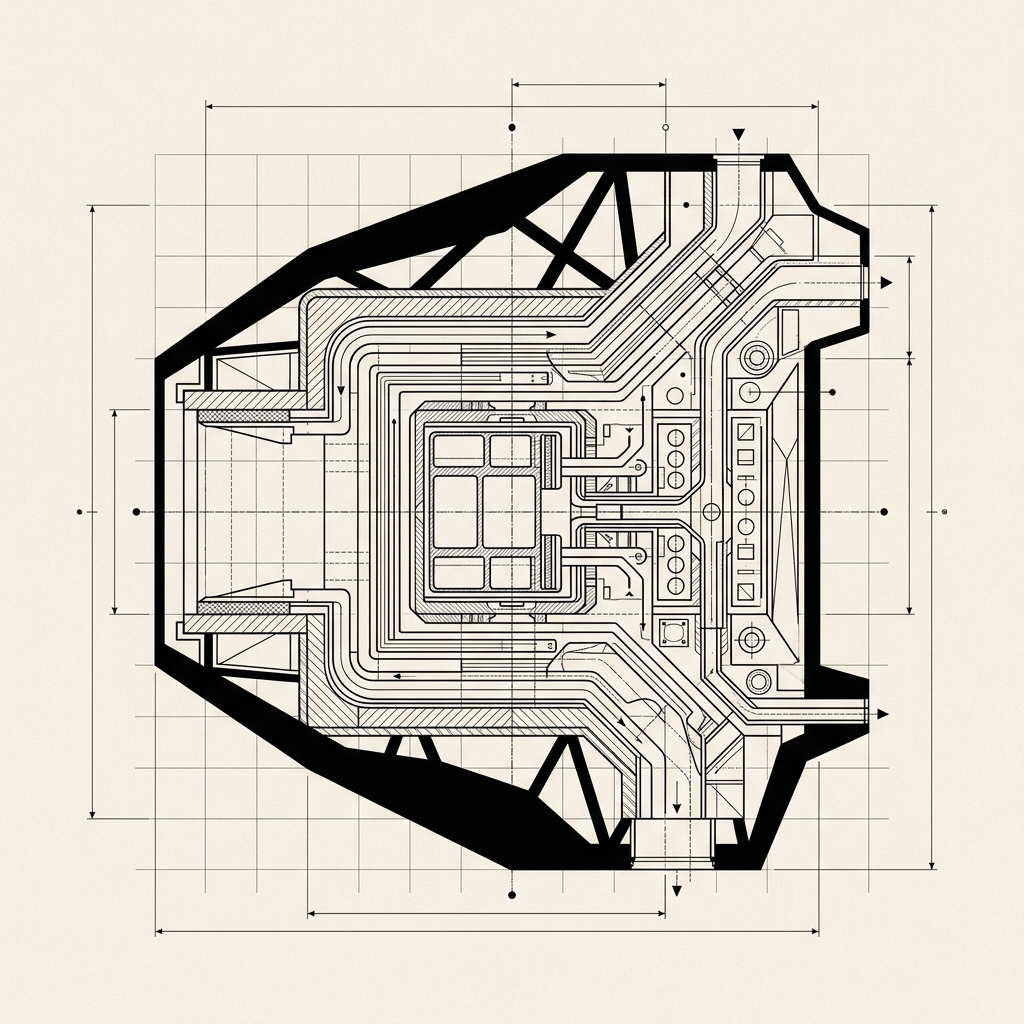

Figure 5: The precision bounded synthesis model, showing how modifications are restricted to specific symbolic anchors to minimize project noise.
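Structural symbolic refactoring can be approximated for Python sources with the standard library `tokenize` module. The sketch below assumes a deliberately simplified notion of a symbol: every identifier token with the old name that is not an attribute access. It is not scope-aware, and `rename_symbol` is a hypothetical name used for illustration.

```python
import io
import tokenize


def rename_symbol(source: str, old: str, new: str) -> str:
    """Rename identifier tokens equal to `old`, leaving strings, comments, and layout intact."""
    lines = source.splitlines(keepends=True)
    hits = []
    prev = None
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type == tokenize.NAME and tok.string == old:
            # Skip attribute accesses such as `obj.old` (simplifying assumption).
            is_attribute = prev is not None and prev.type == tokenize.OP and prev.string == "."
            if not is_attribute:
                hits.append(tok.start)  # (row, col), with row counted from 1
        prev = tok
    # Apply right-to-left so earlier column offsets on the same line stay valid.
    for row, col in sorted(hits, reverse=True):
        line = lines[row - 1]
        lines[row - 1] = line[:col] + new + line[col + len(old):]
    return "".join(lines)


if __name__ == "__main__":
    code = "def total(x):\n    # total of x\n    return x.total + total(x - 1)\n"
    print(rename_symbol(code, "total", "sum_all"))
```

Working at the token level keeps comments, string literals, and whitespace byte-for-byte identical, so the resulting diff contains only the renamed identifiers.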

Regression Avoidance and Diff Minimization

By using these precision protocols, we can achieve a significant reduction in modification noise. In our benchmarks, surgical mutations resulted in diffs that were 80% smaller than those produced by standard whole-file replacement models. This minimization is not just about aesthetics: it is about safety. Smaller diffs are easier for human developers to review and less likely to introduce unintended side effects. They also leave unrelated logic, formatting, and comments untouched, preserving the project's original intent and history.
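One way to quantify diff size is to count the lines removed and added between two versions of a file. The sketch below uses `difflib.SequenceMatcher` from the standard library. The metric and the function name `changed_line_count` are assumptions for illustration, not the measurement used in the benchmarks above.

```python
import difflib


def changed_line_count(before: str, after: str) -> int:
    """Count lines removed from `before` plus lines added in `after`."""
    a, b = before.splitlines(), after.splitlines()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    return sum(
        (i2 - i1) + (j2 - j1)
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    )


if __name__ == "__main__":
    original = "a = 1\nb = 2\nc = 3\n"
    targeted = "a = 1\nb = 20\nc = 3\n"  # only the intended line changes
    rewritten = "a=1\nb=20\nc=3\n"       # same logic, but every line is reformatted
    print(changed_line_count(original, targeted))
    print(changed_line_count(original, rewritten))
```

In this toy input the targeted edit touches one line on each side of the diff, while the reformatting rewrite touches every line even though the logic change is identical.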

Furthermore, our engine tracks an "integrity score" for every edit. If a proposed change would significantly degrade the maintainability or readability of the code, the synthesis engine can autonomously reject the edit and request a more refined approach from the orchestration layer. This creates a feedback loop in which the quality of the output is prioritized over the speed of execution.

Safety Guardrails and Monitoring Heuristics

Beyond the interventions themselves, our research focuses on session-level safety. We have implemented monitoring heuristics that track the rate and nature of modifications. If the system begins to exhibit behaviors that exceed safe thresholds, such as attempting to modify a large number of components without a verified architectural contract, the session is immediately suspended. This "panic button" logic is critical for production environments, where an autonomous agent could otherwise cause significant damage if it enters a failure state.
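A session monitor of this kind can be sketched as a small stateful object. The thresholds, the sliding-window rate check, and the names `SessionMonitor` and `SessionSuspended` below are illustrative assumptions. The text above does not specify the actual heuristics or limits.

```python
from collections import deque
from dataclasses import dataclass, field


class SessionSuspended(Exception):
    """Raised when the session has been halted by a monitoring heuristic."""


@dataclass
class SessionMonitor:
    max_unverified_components: int = 5  # hypothetical threshold
    max_edits_per_window: int = 20      # hypothetical threshold
    window_seconds: float = 60.0
    suspended: bool = False
    _unverified: set = field(default_factory=set)
    _timestamps: deque = field(default_factory=deque)

    def record_edit(self, component: str, contract_verified: bool, now: float) -> None:
        """Register one edit and suspend the session if a threshold is exceeded."""
        if self.suspended:
            raise SessionSuspended("session is already suspended")
        # Rate heuristic: keep only the timestamps inside the sliding window.
        self._timestamps.append(now)
        while now - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        # Nature heuristic: track distinct components edited without a verified contract.
        if not contract_verified:
            self._unverified.add(component)
        if len(self._timestamps) > self.max_edits_per_window:
            self._suspend("edit rate exceeded")
        if len(self._unverified) > self.max_unverified_components:
            self._suspend("too many components modified without a verified contract")

    def _suspend(self, reason: str) -> None:
        self.suspended = True
        raise SessionSuspended(reason)
```

Once suspended, the monitor refuses every further edit, so resuming work requires an explicit decision outside the agent's own loop.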

This safety-critical framework ensures that the system remains a helpful partner. By treating every modification as a high-precision architectural operation rather than a creative rewrite, we achieve the level of consistency and safety required for large-scale production environments. Our future work in this area involves developing "impact prediction" models that can estimate the risk of a proposed change before it is ever applied to the codebase.